It's time for the regular person to start testing local AI models

Earlier in the year, I wrote a post on how local AI models (that run on your laptop, and not in the cloud) are an absolutely necessary insurance policy as things play out in the AI era, but they were pretty much out of reach for the average user. I define the average user as one with a modern laptop with 16-32 GB of RAM and a basic built-in GPU (if any). I think it’s likely that this represents 99% of the AI user population (aka the silent majority), while the voices that dominate the discourse (superusers, tech bros with GPUs, and anyone with financial incentives within the AI supply chain) make up only the elite 1%.

Large language models are awesome, and I love using them for various use cases. But I also care about privacy, cost, and the longevity of my own critical thinking skills. I have been tracking how and when the average user can get their hands on AI models they can truly own: with no fear of personal data being sent to third parties, with the ability to tune the model if they choose to, and, most importantly, without having to pay anything on top of the devices they already have.

In this post, I’ll go through some of the reasons why I think it’s time for most people to start testing local AI models for their personal use cases. The goal is to reduce your reliance on pricey, privacy-invasive third-party models, and to find out when local models are “good enough” to augment your brain without eroding your own critical skills.

TL;DR

AI economics are changing and it’s getting pricey out there. Companies are jacking up subscription and token prices (doubling in some cases), often accompanied by quality and service degradation to manage the surge in demand.

Open source & fully local models from Gemma & Qwen released in April 2026 are surprisingly capable, which is giving me a lot of hope.

It’s the right time to start tracking local AI readiness across several use cases like agentic coding, personal knowledge management, chat & research. Everything should be running on your laptop and not on expensive external GPUs.

As a proof of concept, I did all the research for this post using locally running Gemma & Qwen models on my laptop. I’m working on more fully local & private use cases for personal & proactive agent concepts.

The next series of posts will cover some of these use cases, along with setup guides so you can try out these models without getting into the weeds.

AI economics: the status quo is breaking

I’ve had a very enjoyable ride using frontier models like Claude & ChatGPT for tons of use cases, especially with agentic coding to help me build some cool projects personally & professionally. But what I experienced in Nov 2025 - Feb 2026 is not true anymore at the end of April 2026.

At the start of the new year, a $20 plan from Claude and a $20 plan from ChatGPT (for Codex) were all I needed to build whatever I wanted, from my personal website to some cool gamified data science experiments here and here. In the early days, I had been using Claude & ChatGPT models for coding using the old copy-paste approach, but starting with Sonnet 4.5 (Sep 2025), I think we truly entered an agentic coding era, where copy-paste was no longer needed and the models were able to build something that works pretty quickly inside of VS Code. It was still very sloppy, but Opus 4.5 (Nov 2025) took it to a new level of high-quality, automated code generation that felt magical even to someone like me who’s been coding for almost 20 years now. At the same time, GPT 5.3 & 5.4 with Codex were pretty awesome too, only marginally behind Claude in the “fun” factor.

But since then, things have changed a LOT.

To begin with, I feel like I’m already hitting diminishing returns from using these overpowered tools for the work I need to do. The dopamine hits from watching terminal tik-tok are finally getting to me, I can’t keep up with all the features released every week, and the amount of back-n-forth needed to get high-quality output is exhausting. I’m reaching the point where I could just do it myself in the same amount of time. It’s time to zoom out and take a pause.

In the last couple of months, we’ve seen model degradations, price hikes, and really bad communication from the AI model providers. This information may be too niche for some of my readers, but the latest drama unfolded around Anthropic’s severe quality degradation with the Claude models.

I pulled up Anthropic’s April 23 postmortem (Source). They traced Claude Code quality degradation to three separate changes that shipped between March and April. I’ll summarize below if you’re interested in the deep technical details:

On March 4, they downgraded the default reasoning effort from high to medium, because the high-effort mode was freezing the UI for some users. Users noticed Claude felt dumber, but didn’t know why. Reverted April 7 after a lot of online backlash.

On March 26, they shipped a caching optimization with a bug. After an idle session, Claude’s thinking history got wiped on every single turn instead of just once. Claude became forgetful and repetitive. They couldn’t reproduce the bug internally for over a week. Two unrelated experiments masked it. Fixed April 10.

On April 16, they added a system prompt telling Claude to “keep text between tool calls to ≤25 words” to cut down verbosity. That single prompt line hurt coding quality by ~3%. Reverted April 20.

I’m sharing all this just to show what’s happening behind the scenes, and it’s probably a glimpse of what’s coming next.

I totally understand that early, innovative tech will have bugs, and I’m not saying it all needs to be perfect. But AI providers market their products as if these are god-like magical tools, while the companies consuming them lay off workers saying they’ve been ‘replaced’ by AI. Neither of these narratives matches the reality at the ground level, where the experience has shifted from being user-centric to being company-centric.

And the pricing? I think it’s safe to say that what I’m getting out of a $100/month plan now is probably less than what I got from a $20 plan in Oct 2025. Other models like GLM, Kimi, Minimax, and of course GPT have all either doubled their prices or nerfed the lower tiers, leaving the higher tier as the only option that delivers an equivalent experience. We have officially entered the enshittification stage of AI. And with every new model release, my excitement is hitting diminishing returns too. Reddit & X posts also seem like they run content on a schedule. This meme below might seem familiar:

The Rise of Small, Capable Models

Earlier this year, when I wrote my first post about local AI models, I tried several small models from Qwen, Gemma, Mistral, Hermes, GLM, and others in the 8–30B parameter range. They all fit in memory on my 48 GB M4 Pro MacBook, but they didn’t really “work”. The context windows were too small, there was barely any tool calling, no vision capabilities (you had to use separate models), and the quality was way off compared to Claude or Codex.

So I thought we were probably years away from a “good enough” local setup, especially since I was NOT going to buy NVIDIA GPUs, and I advise my readers not to fall into that rabbit hole. Leave that for the r/LocalLLaMA crowd. They’re ahead of the curve, but they’re also deep into optimizing the tiniest of details and building bigger and bigger GPU rigs to run very large models at home. None of that is for the average person.

Recent releases of some small, open-source models from Gemma (Google’s open model family) and Qwen (Alibaba) have bridged that gap significantly. With the releases below, it feels like I’m getting close to a genuinely useful local setup for the first time.

Gemma 4 models (link):

Super small models: 2B and 4B effective parameter models, built for mobile and in-browser deployment. I’m excited to see these run on phones especially.

Medium-sized dense and MoE models: 31B (dense) and 26B (MoE). Both handle agentic coding and daily-driver work. The MoE variants require less memory.

Qwen 3.5 and 3.6 models:

Small: Qwen3.5-9B — runs comfortably on a laptop with 16 GB+ RAM.

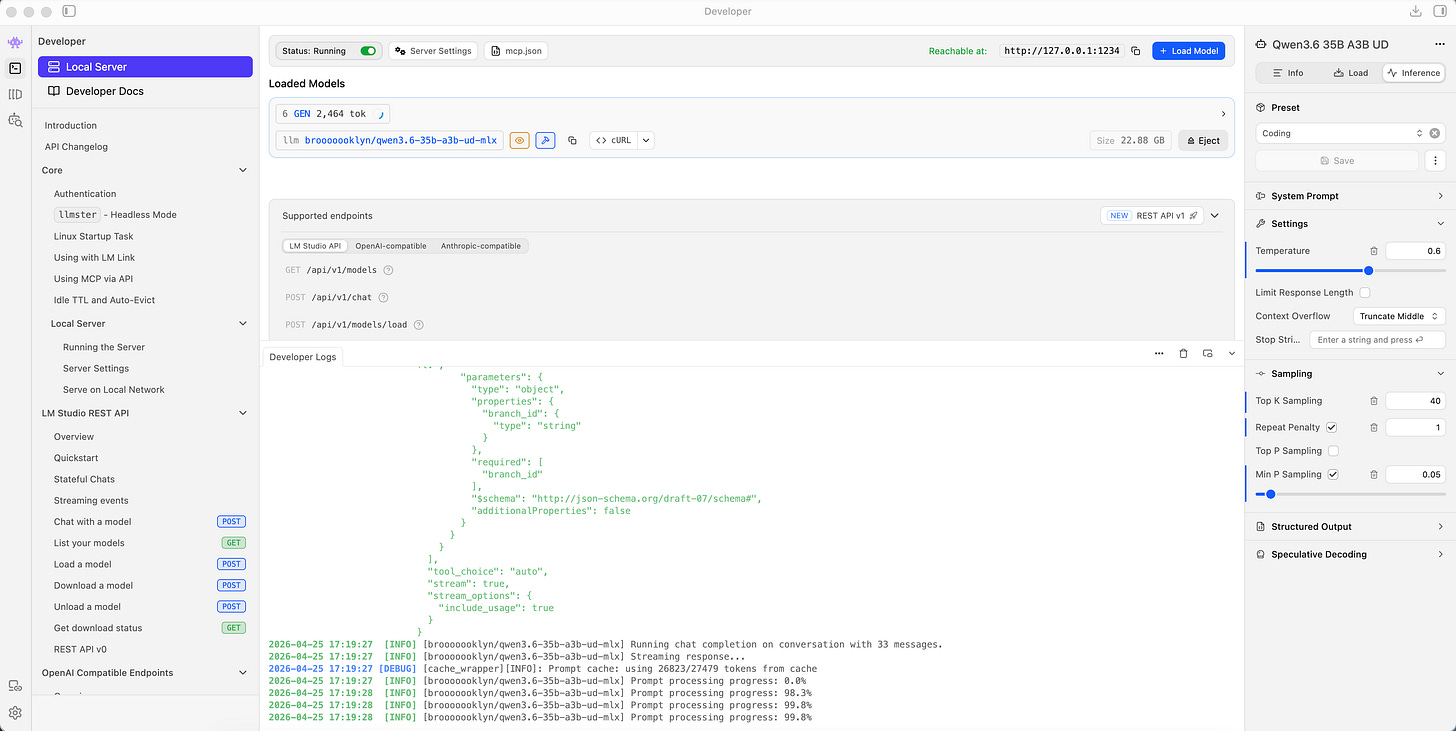

Medium: Qwen3.6 26B (dense) and Qwen 3.6 35B A3B (MoE) — excellent coding, reasoning, and tool-calling support.

They all offer reasoning, multimodality (text, image, audio, video), massive context windows (128K–256K), tool calling (critical for agentic coding), and system prompt support for tailoring model behavior. This was the missing piece for me a few months ago — where the earlier models felt like demos, and now they feel closer to Sonnet 4 or Sonnet 4.5 (especially the medium-sized models).
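If you want a rough sense of whether one of these models will fit on your laptop, the arithmetic can be sketched as below. This is only a back-of-the-envelope estimate, not a measurement: the 4-bit quantization, the 4 GB allowance for context cache and runtime, and the 75% "usable RAM" fraction are all my assumptions, and the sketch counts total parameters (for MoE models too) because it assumes the whole model is loaded into memory.

```python
def estimate_weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes).

    One billion parameters at 8 bits each is roughly 1 GB, so the size
    scales linearly with both parameter count and bits per weight.
    """
    return params_billion * bits_per_weight / 8


def fits_in_ram(
    params_billion: float,
    bits_per_weight: float,
    ram_gb: float,
    overhead_gb: float = 4.0,       # assumed allowance for context cache + runtime
    usable_fraction: float = 0.75,  # assumed share of RAM not needed by OS and apps
) -> bool:
    """Return True if the estimated footprint fits in the usable part of RAM."""
    needed_gb = estimate_weight_gb(params_billion, bits_per_weight) + overhead_gb
    return needed_gb <= ram_gb * usable_fraction


if __name__ == "__main__":
    # Parameter counts taken from the model list above; 4-bit is an assumed quantization.
    for name, params in [("9B", 9), ("26B", 26), ("31B", 31), ("35B", 35)]:
        for ram in (16, 32, 48):
            verdict = "fits" if fits_in_ram(params, 4, ram) else "too big"
            print(f"{name} model @ 4-bit on {ram} GB RAM: {verdict}")
```

Under these assumptions, a 9B model is comfortable on a 16 GB laptop, while the medium-sized models want 32 GB or more. Real usage depends heavily on the quantization format and how much context you load, so treat the numbers as a starting point.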

Pair up these open-source models with some open-source coding harnesses like Pi (my fav) or OpenCode (my 2nd fav), and you are living the open-source dream!

Use Cases: Privacy, Trust, and Zero Cost

For the average person, the motivations for moving to local AI models shouldn’t necessarily be about being “contrarian”. It should be about the fundamental value these tools actually provide:

Data Privacy: What companies already learn from your browsing activity and app usage — via cookies and device IDs — has been supercharged by AI-based data collection, because users literally reveal everything about themselves to these tools, willingly. (This is also one of the reasons why I never use Gemini or Meta AI models. I only use Gemma models from Google’s research division because they’re open-source and private.)

Trustworthiness: If you want, local models can be tuned to behave exactly the way you need them to. Hallucinations won’t be solved since they’re an inherent problem of LLM architecture, but you can make the model’s behavior more predictable, and you won’t be beholden to an AI company’s policy changes.

Cost: Once you have the hardware, i.e., your laptop and phone, the marginal cost of inference is effectively zero.

What can you do with these models today:

Coding Assistance: Like I mentioned earlier, pair these models with coding tools like Pi or OpenCode, and you have the same Claude Code setup you’re used to.

Personal Knowledge Management (PKM): If your notes, files, and documents live on your laptop (I use Obsidian, for example), then all of that can be accessed by your local AI using tool calling now. And none of this sensitive, personal data is sent to 3rd party servers.

Personal Chat & Research Companion: Local models also have web search abilities, so everyday AI conversations can pretty much be replaced (probably the easiest use case).

Proof of Concept: I literally just used Gemma 4 26B A4B and Qwen 3.6 35B A3B running on my laptop using LM Studio + OpenCode to help me do some of the research for this post (the writing is entirely my own). They were fast enough, and the reasoning was sufficient for the initial drafting and some basic web searching. Could I have gotten better output from GPT 5.5 or Claude? Absolutely. But is this good enough? Yes, it is, and it cost me nothing.
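To make the PKM use case above a bit more concrete, here is a minimal sketch of what "local AI reading your notes via tool calling" can look like. It assumes a local server that speaks the OpenAI-style chat completions format at `http://localhost:1234/v1` (adjust this for your own setup). The model name, the `search_notes` tool, and the notes folder layout are hypothetical names I made up for illustration; this is not how Pi or OpenCode implement it.

```python
import json
from pathlib import Path
from urllib import request

BASE_URL = "http://localhost:1234/v1"  # assumed local server address
MODEL = "local-model"                  # hypothetical; use the name your server reports

# Tool description the model sees, in the OpenAI-style "tools" format.
SEARCH_NOTES_TOOL = {
    "type": "function",
    "function": {
        "name": "search_notes",
        "description": "Search the user's local markdown notes for a phrase.",
        "parameters": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
}


def search_notes(notes_dir: str, query: str, max_hits: int = 5) -> list[dict]:
    """Case-insensitive line search over *.md files. Nothing leaves the machine."""
    hits = []
    for path in sorted(Path(notes_dir).rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        for line_no, line in enumerate(text.splitlines(), start=1):
            if query.lower() in line.lower():
                hits.append({"file": path.name, "line": line_no, "text": line.strip()})
                if len(hits) >= max_hits:
                    return hits
    return hits


def build_request(question: str) -> dict:
    """Build the chat payload that offers the notes tool to the model."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": question}],
        "tools": [SEARCH_NOTES_TOOL],
    }


def ask_local_model(payload: dict) -> dict:
    """POST the payload to the local server (requires the server to be running)."""
    req = request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with request.urlopen(req, timeout=120) as resp:
        return json.load(resp)
```

If the model's reply asks to call `search_notes`, you would run the function yourself and send the hits back as a follow-up message so the model can answer from them. A coding harness does this loop for you; the point of the sketch is that both the search and the model run on your laptop.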

What’s Next

This is just the beginning of a series of posts I’m writing on local AI, now that capabilities are getting better and models are getting small enough to fit in my laptop’s RAM. A few things on my mind are below, which I hope will help folks who are interested in trying some of this out on their own too:

Setup Guides: Step-by-step instructions for the “regular person” to get these models running on their laptop without going into the weeds. Coming up in the next post.

Local AI Readiness Benchmark: I’m building a lightweight benchmarking tool that others can run to assess how well current small models handle everyday use cases — coding, extraction, summarization — on typical laptops. This will help understand when YOUR use-cases (as opposed to cryptic benchmarks) are fulfilled by the local AI models.

Tuning for Trust: Researching methods to fine-tune or prompt small models specifically for low sycophancy, higher trust, and high privacy.

The “Proactive Agent” side quest: Moving toward proactive agents that can manage my email, calendar, and reminders, and eventually—my favorite—a proactive agent that works off my long-term goals and behaviors to assist me without being asked. If you’ve heard of OpenClaw, it’s the same idea, but I want to build a thin, tunable slice of that, while also exploring whether I could combine LLMs with traditional ML for some of this. This is ambitious, but could be a lot of good learning.
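For a feel of what a use-case-driven readiness check could look like, here is a small illustrative sketch. It is not the benchmarking tool mentioned above, just one possible shape for the idea: each task pairs a prompt with a pass/fail check you write yourself, and the harness records pass rate and latency. The `Task` class, `run_benchmark`, and `stub_model` are hypothetical names, and the stub stands in for a call to a real local model.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the model output is acceptable


def run_benchmark(model: Callable[[str], str], tasks: list[Task]) -> dict:
    """Run every task through the model and record pass/fail plus latency."""
    results = []
    for task in tasks:
        start = time.perf_counter()
        output = model(task.prompt)
        elapsed = time.perf_counter() - start
        results.append(
            {"task": task.name, "passed": task.check(output), "seconds": elapsed}
        )
    passed = sum(1 for r in results if r["passed"])
    pass_rate = passed / len(tasks) if tasks else 0.0
    return {"pass_rate": pass_rate, "results": results}


def stub_model(prompt: str) -> str:
    """Placeholder model: swap in a function that calls your local model."""
    return "4" if "2 + 2" in prompt else ""


if __name__ == "__main__":
    my_tasks = [
        Task("arithmetic", "What is 2 + 2?", lambda out: "4" in out),
        Task("extraction", "Extract the year from: 'Released in 2026'",
             lambda out: "2026" in out),
    ]
    report = run_benchmark(stub_model, my_tasks)
    print(f"pass rate: {report['pass_rate']:.0%}")
    for row in report["results"]:
        print(row)
```

The design choice worth noting is that the checks are yours: a model is "ready" when it passes the tasks you actually care about at a speed you can live with, regardless of how it scores on public benchmarks.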

Thanks for reading if you’ve reached all the way down here. Please let me know your thoughts if any of this resonates with you, or if you have any ideas about what I should research or write about next.