The Dopamine Trap of AI Coding

This is a personal reflective essay about my experience with how AI coding tools hijack the “earned progress” dopamine loop, making “watching progress” feel like real work, pushing you away from true learning, mastery and building things that are useful. If you’re in engineering or data science, and have been immersing yourself in the latest AI tools or just drowning in all the noise, it’ll probably hit closest to home. If you’re not, just read it as a story about living through the AI era.

When things used to take time

I fell into programming when I couldn’t get into medical school (long story for another day). I struggled hard with it initially, but eventually it became a medium for self-expression for me, and something I still love as much as I love painting or sketching. It’s a creative pursuit after all, through and through. But it used to take a very long time to make something useful.

Late 2000s. CS undergrad. Struggling through microprocessors, compiler construction, and projects like writing AES from scratch in C++. To finish any of these projects, it always took weeks of grinding through textbooks, online forums, searching on a brand-new StackOverflow (RIP), plus a lot of actual thinking about architecture, constraints, and the problem you were trying to solve.

Sure, even then you could call AES via a library in Java or Python. But the reason colleges put you through the torture of building things from scratch was to train your brain to solve hard problems, so you could use that training in similar situations on the job, in the real world. The process was ugly. But when the code finally ran correctly and passed all the tests, it would feel like a real, earned victory.

I wasn’t always grinding on abstract CS problems. I was also learning by exploring and tinkering, which for me was the best way to build deep understanding in those formative years. The resources I had were far from ideal. My first laptop was a Lenovo with 256 MB of RAM (yes, megabytes) and an Intel Pentium processor, barely good enough to run my usuals - C & C++ code, MATLAB, Age of Empires 2 (greatest game of all time), and Photoshop.

As I got deeper into the craft, I became a Linux enthusiast. At one point I had five different OSes in my GRUB menu: Windows, Ubuntu, Linux Mint, Kali, and Hackintosh (which barely worked). I was in full-on FAFO mode in computer science.

All this to say, I love tinkering, trying out new tools, building random things, because it’s how I learn. But tinkering had an edge to it: you still had to make choices, understand tradeoffs, and get something working for it to be useful. But in 2026, things are different.

The AI acceleration

I was late to the AI “game”. Everyone in my social media echo chambers was posting about how they built this or that using Claude or Codex, and how everything was magical. I have an extremely busy job now, almost 80% leadership and management, which takes a huge mental toll. But I couldn’t resist. I had to go beyond “chatting” with AI or using it for “writing” (neither of which I ever enjoyed).

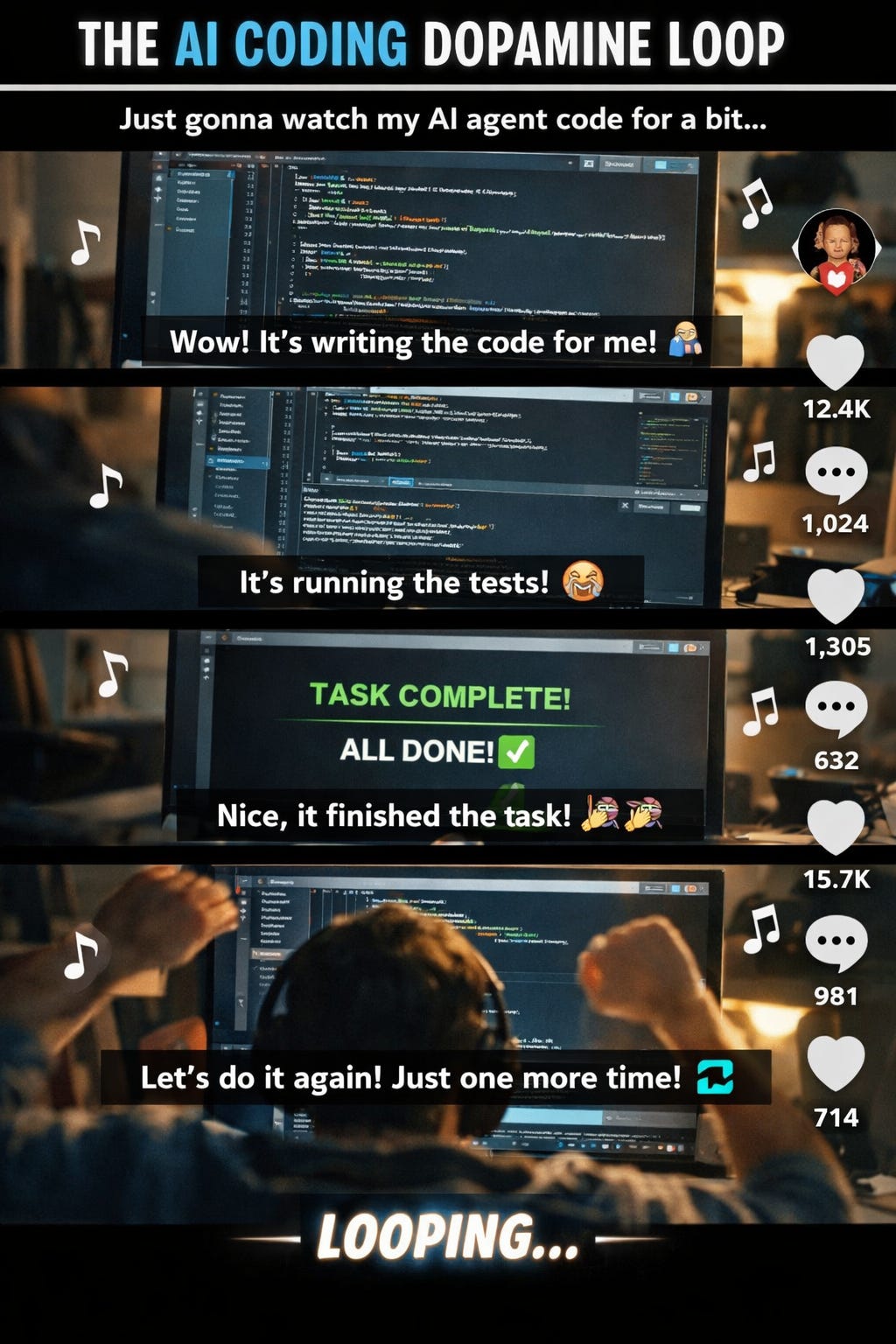

Soon enough, I learned that the barrier to over-engineering and tinkering has been demolished. You point Claude or Codex at a direction and it runs a million miles an hour, including twists and turns it “chooses” (hallucinates) along the way. It does all the work, but YOU still get the dopamine hit. It can feel surprisingly similar to the rush I used to get after weeks of struggle and hundreds of iterations of beating my head against the wall trying to solve pointers in C (final boss of programming). But it’s not the same thing. One is the reward for understanding and finishing. The other is the reward for watching momentum.

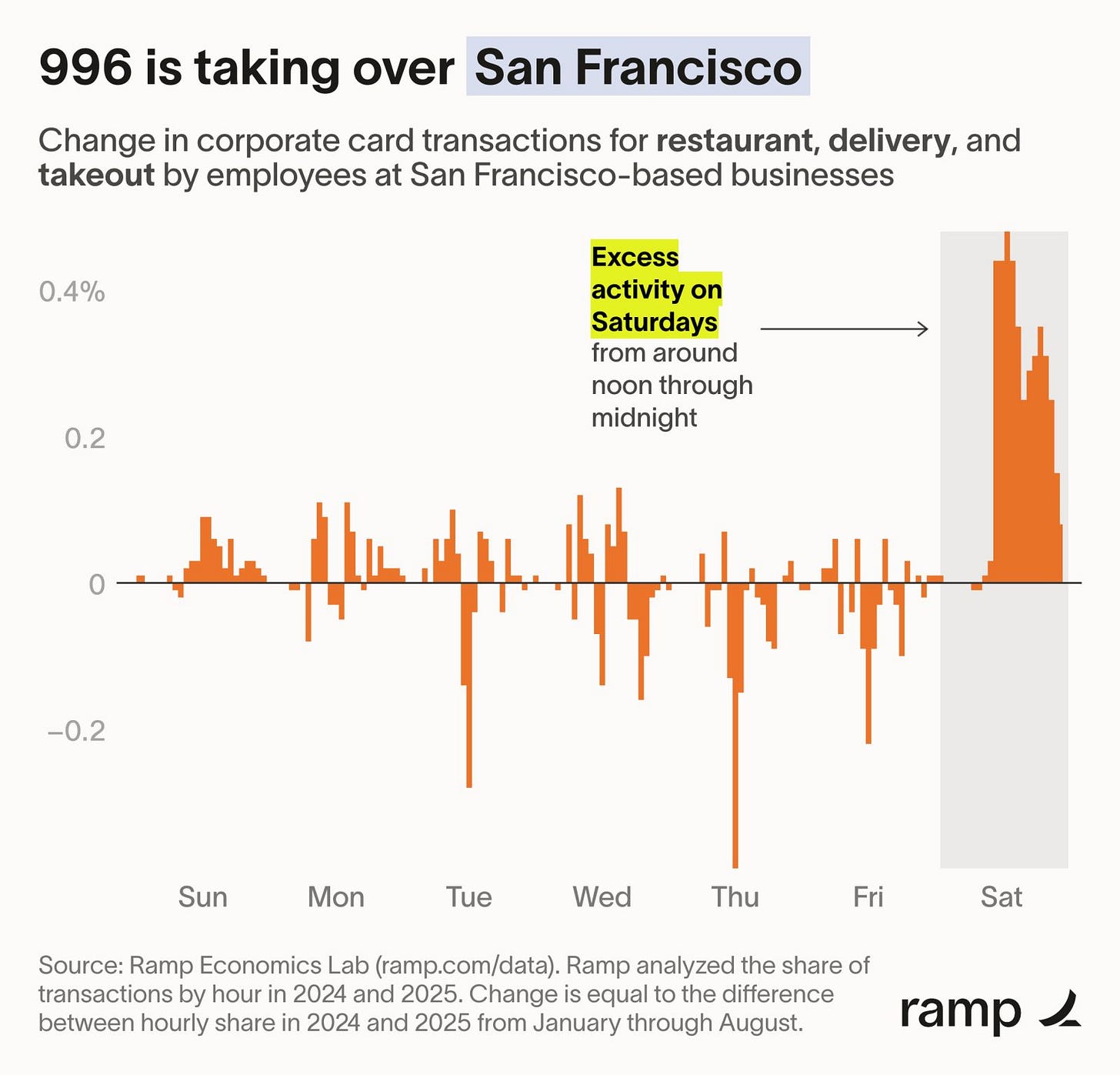

And why is this AI acceleration taking off right now? Open Google Maps and point it to California. San Francisco is so back! Extremely well-compensated & highly “agentic” people are shipping multiple updates per week — adding features, adding bugs, fixing them, doing marketing, collecting feedback in public, while CEOs do nonstop podcast tours. It’s a well-oiled tech + marketing machine, and it’s quite impressive in my professional opinion.

But it’s also “by design”. LLM architectures date back to 2017 and earlier. They were a breakthrough in language processing, but once models got big enough to follow instructions reliably, we crossed a threshold from natural language to programming language. Agentic coding, multi-agent workflows, RAG, MCPs: it’s a long runway of “wrap the model in scaffolding and see what it can do”.

It’s shiny, and it wows people who didn’t grow up in traditional CS education. Don’t get me wrong, it’s super super cool. But it’s also a massive acceleration of automation capabilities that always existed; they just used to take far more human effort to build, maintain, and operate.

The 99% reality check

In the words of the great Grady Booch (whose textbooks I read in undergrad): the history of software engineering is one of rising levels of abstraction.

And yes, we’re living through one hell of an abstraction.

But for now, I don’t see this reducing work for most people. If anything, it’s more work to manage everything: prompts, tool configs, environments, flaky runs, regressions, and the constant temptation to keep “improving” the thing instead of finishing it.

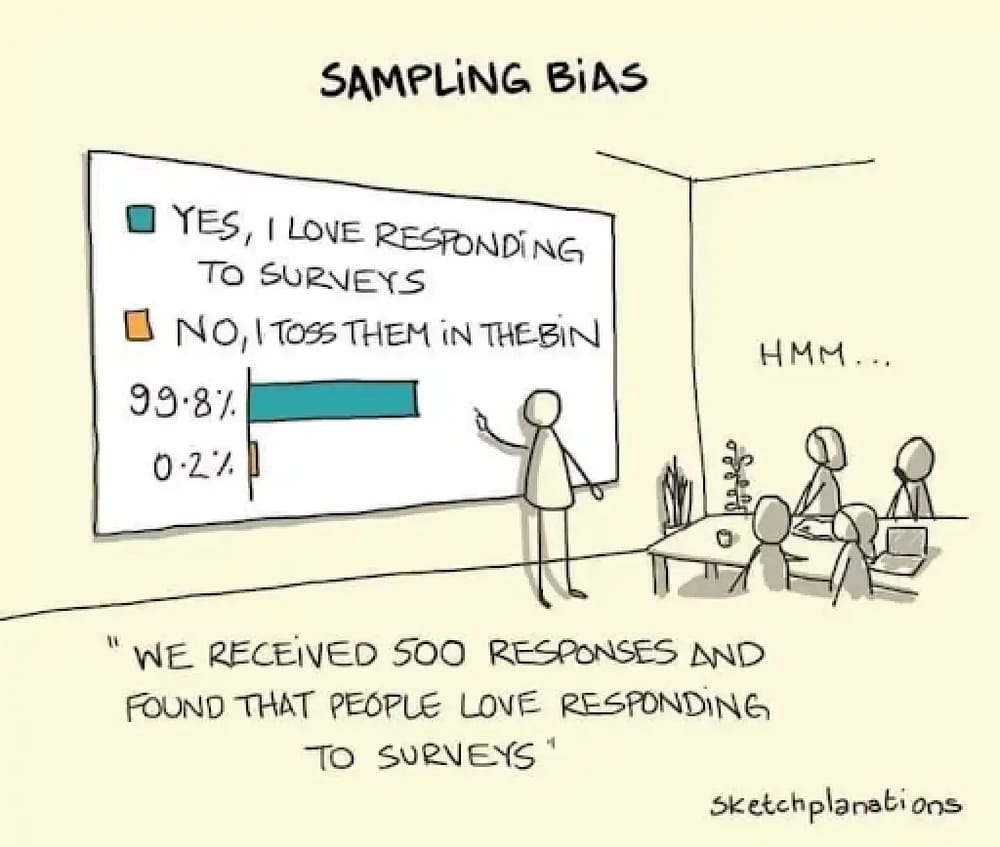

Once you get past the social media noise (“OMG look what I built, it’s magic”), you’ll see the 99% reality: tiny personal apps and prototypes, many of which may not work a couple of weeks from now, or will only work for the person who built them, on their machine, with their exact setup and context.

When Anthropic says they use Claude Code to build Claude Code, I believe them. They have the talent, the resources, the systems, the feedback loops, and the discipline to make it work. But that doesn’t mean the rest of us can replicate that consistently at scale.

So what counts as “real work” here? Not generating code. Not watching a terminal fly by. Real work is when the thing is reliable, understandable, maintainable, and useful, especially to someone other than you.

So where does that leave us?

Where does that leave someone like me? Someone who genuinely loves the tinkering, who spent formative years in the struggle, and now has access to tools that make the struggle optional?

I think the honest answer is that the struggle isn’t completely gone if you know what you’re doing. It’s just different now. The hard part used to be getting the code to compile, getting the OS to boot, getting the encryption algorithm to pass test vectors. Today, the problems are abstracted upwards - architecture choices, tech stack, actual user experience. And for my field of data science, it’s still just as hard, because “writing code” was never the problem anyways.

And for data scientists, the job was always about judgement: about the data, the methods, the tradeoffs, the interpretation, and the storytelling. I think my job is safe in the long-term (definitely not in the short-term unfortunately).

I also think for some people (like me, in the echo chambers, loyal pro/max subscribers), the hard part now is knowing when to stop. Knowing when your setup is “good enough” and it’s time to actually finish something. Knowing the difference between productive exploration and endless tinkering disguised as engineering. The tinkering trap isn’t that people are building things with AI. That’s genuinely fantastic. I love seeing people say “I built an app for myself”, especially when you’d never imagine them saying that a year ago.

The trap is more subtle: the dopamine arrives before the understanding. And if you’re not careful, you start mistaking:

activity for learning (“I shipped code, therefore I learned”)

output for usefulness (“it runs right now, for me, therefore it will be valuable for others”)

I don’t know why, but in a weird way it feels to me like the TikTokification of coding. Short bursts of coding -> magic -> app that you love for a week -> dopamine high -> boredom -> new idea -> repeat the cycle. Maybe it’s just me, but this is what it feels like.

Even at companies, I think there will be a separation. Most companies will be lured in by the magic, fail to truly understand what they want, and try to squeeze every problem through the AI filter. A smaller set of companies will figure out scalable, cost-effective ways to use LLMs where they actually help, and still rely on the established principles of software engineering, data science, and domain expertise.

I hope to be on the side of the latter, from both a personal and professional standpoint. I’m having a lot of fun FAFOing around with Claude Code and Codex, but I’m paying close attention to these traps (and the inevitable enshittification of it all), and trying to see where this lands once the hype dissipates (if ever).

In a follow-up post, I’ll open-source my Claude / Codex setup for solo development and walk through what “good enough” looks like to me. It’s a setup for tinkering around, but also for learning and building useful things at personal as well as corporate scale.